🚀 Able to supercharge your AI workflow? Attempt ElevenLabs for AI voice and speech technology!

On this article, you’ll discover ways to consider giant language mannequin functions utilizing RAGAs and G-Eval-based frameworks in a sensible, hands-on workflow.

Matters we are going to cowl embrace:

- Find out how to use RAGAs to measure faithfulness and reply relevancy in retrieval-augmented techniques.

- Find out how to construction analysis datasets and combine them right into a testing pipeline.

- Find out how to apply G-Eval through DeepEval to evaluate qualitative features like coherence.

Let’s get began.

A Fingers-On Information to Testing Brokers with RAGAs and G-Eval

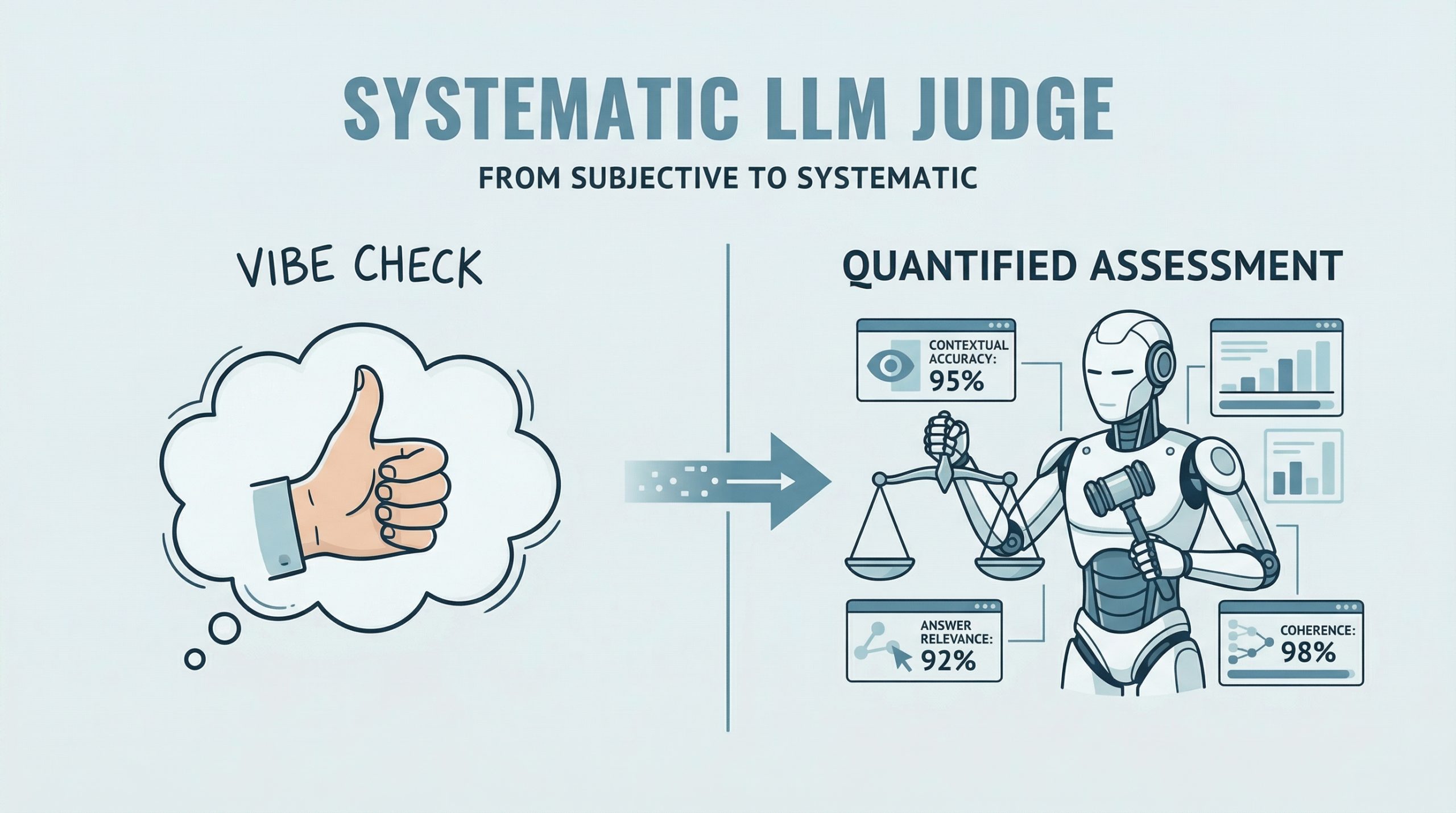

Picture by Editor

Introduction

RAGAs (Retrieval-Augmented Technology Evaluation) is an open-source analysis framework that replaces subjective “vibe checks” with a scientific, LLM-driven “choose” to quantify the standard of RAG pipelines. It assesses a triad of fascinating RAG properties, together with contextual accuracy and reply relevance. RAGAs has additionally advanced to assist not solely RAG architectures but additionally agent-based functions, the place methodologies like G-Eval play a task in defining customized, interpretable analysis standards.

This text presents a hands-on information to understanding how you can check giant language mannequin and agent-based functions utilizing each RAGAs and frameworks based mostly on G-Eval. Concretely, we are going to leverage DeepEval, which integrates a number of analysis metrics right into a unified testing sandbox.

In case you are unfamiliar with analysis frameworks like RAGAs, think about reviewing this associated article first.

Step-by-Step Information

This instance is designed to work each in a standalone Python IDE and in a Google Colab pocket book. You could must pip set up some libraries alongside the best way to resolve potential ModuleNotFoundError points, which happen when trying to import modules that aren’t put in in your setting.

We start by defining a perform that takes a consumer question as enter and interacts with an LLM API (corresponding to OpenAI) to generate a response. It is a simplified agent that encapsulates a fundamental input-response workflow.

|

import openai

def simple_agent(question): # NOTE: this can be a ‘mock’ agent loop # In an actual situation, you’d use a system immediate to outline instrument utilization immediate = f“You’re a useful assistant. Reply the consumer question: {question}”

# Instance utilizing OpenAI (this may be swapped for Gemini or one other supplier) response = openai.chat.completions.create( mannequin=“gpt-3.5-turbo”, messages=[{“role”: “user”, “content”: prompt}] ) return response.selections[0].message.content material |

In a extra real looking manufacturing setting, the agent outlined above would come with extra capabilities corresponding to reasoning, planning, and power execution. Nonetheless, because the focus right here is on analysis, we deliberately maintain the implementation easy.

Subsequent, we introduce RAGAs. The next code demonstrates how you can consider a question-answering situation utilizing the faithfulness metric, which measures how effectively the generated reply aligns with the offered context.

|

from ragas import consider from ragas.metrics import faithfulness

# Defining a easy testing dataset for a question-answering situation knowledge = { “query”: [“What is the capital of Japan?”], “reply”: [“Tokyo is the capital.”], “contexts”: [[“Japan is a country in Asia. Its capital is Tokyo.”]] }

# Operating RAGAs analysis consequence = consider(knowledge, metrics=[faithfulness]) |

Notice that you could be want ample API quota (e.g., OpenAI or Gemini) to run these examples, which usually requires a paid account.

Under is a extra elaborate instance that includes a further metric for reply relevancy and makes use of a structured dataset.

|

test_cases = [ { “question”: “How do I reset my password?”, “answer”: “Go to settings and click ‘forgot password’. An email will be sent.”, “contexts”: [“Users can reset passwords via the Settings > Security menu.”], “ground_truth”: “Navigate to Settings, then Safety, and choose Forgot Password.” } ] |

Make sure that your API key’s configured earlier than continuing. First, we reveal analysis with out wrapping the logic in an agent:

|

import os from ragas import consider from ragas.metrics import faithfulness, answer_relevancy from datasets import Dataset

# IMPORTANT: Change “YOUR_API_KEY” together with your precise API key os.environ[“OPENAI_API_KEY”] = “YOUR_API_KEY”

# Convert listing to Hugging Face Dataset (required by RAGAs) dataset = Dataset.from_list(test_cases)

# Run analysis ragas_results = consider(dataset, metrics=[faithfulness, answer_relevancy]) print(f“RAGAs Faithfulness Rating: {ragas_results[‘faithfulness’]}”) |

To simulate an agent-based workflow, we are able to encapsulate the analysis logic right into a reusable perform:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 |

import os from ragas import consider from ragas.metrics import faithfulness, answer_relevancy from datasets import Dataset

def evaluate_ragas_agent(test_cases, openai_api_key=“YOUR_API_KEY”): “”“Simulates a easy AI agent that performs RAGAs analysis.”“”

os.environ[“OPENAI_API_KEY”] = openai_api_key

# Convert check circumstances right into a Dataset object dataset = Dataset.from_list(test_cases)

# Run analysis ragas_results = consider(dataset, metrics=[faithfulness, answer_relevancy])

return ragas_results |

The Hugging Face Dataset object is designed to effectively signify structured knowledge for giant language mannequin analysis and inference.

The next code demonstrates how you can name the analysis perform:

|

my_openai_key = “YOUR_API_KEY” # Change together with your precise API key

if ‘test_cases’ in globals(): evaluation_output = evaluate_ragas_agent(test_cases, openai_api_key=my_openai_key) print(“RAGAs Analysis Outcomes:”) print(evaluation_output) else: print(“Please outline the ‘test_cases’ variable first. Instance:”) print(“test_cases = [{ ‘question’: ‘…’, ‘answer’: ‘…’, ‘contexts’: […], ‘ground_truth’: ‘…’ }]”) |

We now introduce DeepEval, which acts as a qualitative analysis layer utilizing a reasoning-and-scoring method. That is notably helpful for assessing attributes corresponding to coherence, readability, and professionalism.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 |

from deepeval.metrics import GEval from deepeval.test_case import LLMTestCase, LLMTestCaseParams

# STEP 1: Outline a customized analysis metric coherence_metric = GEval( identify=“Coherence”, standards=“Decide if the reply is simple to observe and logically structured.”, evaluation_params=[LLMTestCaseParams.INPUT, LLMTestCaseParams.ACTUAL_OUTPUT], threshold=0.7 # Go/fail threshold )

# STEP 2: Create a check case case = LLMTestCase( enter=test_cases[0][“question”], actual_output=test_cases[0][“answer”] )

# STEP 3: Run analysis coherence_metric.measure(case) print(f“G-Eval Rating: {coherence_metric.rating}”) print(f“Reasoning: {coherence_metric.motive}”) |

A fast recap of the important thing steps:

- Outline a customized metric utilizing pure language standards and a threshold between 0 and 1.

- Create an

LLMTestCaseutilizing your check knowledge. - Execute analysis utilizing the

measuremethodology.

Abstract

This text demonstrated how you can consider giant language mannequin and retrieval-augmented functions utilizing RAGAs and G-Eval-based frameworks. By combining structured metrics (faithfulness and relevancy) with qualitative analysis (coherence), you’ll be able to construct a extra complete and dependable analysis pipeline for contemporary AI techniques.

🔥 Need the very best instruments for AI advertising? Take a look at GetResponse AI-powered automation to spice up your enterprise!